We can think of two reasons for using distributed machine learning: because you have to (so much data), or because you want to (hoping it will be faster). Only the first reason is good.

Distributed computation is hard in general, because it adds a layer of complexity and communication overhead. The ideal case is scaling linearly with the number of nodes; that rarely happens. Emerging evidence shows that very often, one big machine, or even a laptop, outperforms a cluster.
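To make the gap between linear and actual scaling concrete, here is a toy model: Amdahl's law plus a communication term that grows with the number of nodes. The function name and both parameter values are our own illustrative assumptions, not measurements from any system discussed in this post.

```python
def speedup(nodes, serial_fraction=0.05, comm_cost_per_node=0.002):
    """Modelled speedup over a single node.

    Single-node run time is normalised to 1. Both parameters are
    illustrative assumptions, not measured values.
    """
    parallel_fraction = 1.0 - serial_fraction
    # A single node pays no communication cost; each extra node adds some.
    comm = comm_cost_per_node * (nodes - 1)
    run_time = serial_fraction + parallel_fraction / nodes + comm
    return 1.0 / run_time


if __name__ == "__main__":
    for n in (1, 2, 8, 32, 128):
        print(f"{n:4d} nodes: ideal {n:4d}x, modelled {speedup(n):5.2f}x")
```

With these made-up parameters the modelled speedup peaks and then declines as nodes are added, because the communication term eventually outweighs the shrinking parallel part.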

We’ve compiled a few benchmarks and expert opinions on this matter.

Jure Leskovec

You may have heard of Mining of Massive Datasets - a Stanford course, an accompanying book and a MOOC. One of the authors is Jure Leskovec; he had a workshop at NIPS 2014. In Paul Mineiro’s words:

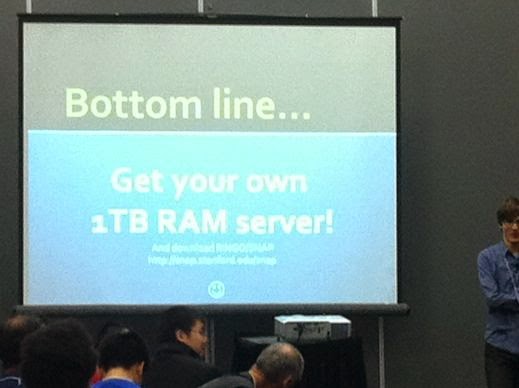

Since this is my day job, I’m of course paranoid that the need for distributed learning is diminishing as individual computing nodes (augmented with GPUs) become increasingly powerful. So I was ready for Jure Leskovec’s workshop talk. Here is a killer screenshot.

Jure said every grad student in his lab has one of these machines, and that almost every data set of interest fits in RAM. Contemplate that for a moment.

Andrew Ng

We found Andrew Ng’s recent Reddit AMA rather flat overall, but this part is interesting:

A lot of deep learning progress is driven by computational scale, and by data. For example, I think the bleeding edge of deep learning is shifting to HPC (high performance computing aka supercomputers) (…)

In 2008, we built I think the first CUDA/GPU deep learning implementation, and helped lead the field to use GPUs. In 2011, I founded and led the Google Deep Learning team (then called Google Brain) to use Google’s cloud to scale up deep learning; and this helped put it on industry’s radar. In 2013, Adam, Bryan Catanzaro and others built the first HPC-style deep learning system, and this helped drive scaling another 1-2 orders of magnitude.

Finally, today at Baidu, we have a system’s team that’s developing what we think is the next generation of deep learning systems, using HPC techniques. If you’re not familiar with HPC, it’s a very different set of tools/people/conferences/methods than cloud computing, and this is giving us another big boost in computation. We think it’s the combination of HPC and large amounts of data that’ll give us the next big increment in deep learning.

TLDR: From the cloud to HPC, from many nodes to one big machine.

C.J. Lin

C.J. Lin seems to be the principal author of LIBSVM. From his 2014 slides on Challenges and Opportunities in Big-data Analytics:

What’s the percentage of applications that need big-data analytics? Not clear. Indeed some think the percentage is small (so they think big-data analytics is a hype).

Until recently, few universities or companies could access data center environments. They therefore think those big ones (e.g., Google) are doing big-data analytics for everything. In fact, the situation isn’t like that.

In my recent visit to a large company, their people did say that most analytics works are still done on one machine.

One of the main points of the talk is that distributed platforms are at an early stage of research. C.J. gives an example of matrix multiplication: a naive C++ implementation takes 204 seconds to run, while Matlab takes four seconds. That’s because Matlab calls an optimized BLAS (Basic Linear Algebra Subprograms) library that was developed in the 80s and 90s.

A lot of engineering time and effort went into making those libraries and at this point they are very well optimized. More recently GPU computation proved worthwhile and the research is booming. There’s no equivalent for the distributed setting. Therefore, on one hand it’s reasonable to expect that it will get faster with time. On the other, fundamental difficulties mentioned in the beginning will still apply.
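The matrix multiplication gap is easy to reproduce in spirit. The sketch below is ours, not C.J.'s benchmark: it times a textbook triple loop against NumPy's `@` operator, which hands floating-point matrix products to whatever BLAS NumPy was built with. The naive version here is pure Python rather than C++, so interpreter overhead exaggerates the difference; the function names and the matrix size are arbitrary choices.

```python
import time

import numpy as np


def naive_matmul(a, b):
    """Textbook triple loop over plain Python lists."""
    n, k, m = len(a), len(b), len(b[0])
    out = [[0.0] * m for _ in range(n)]
    for i in range(n):
        for j in range(m):
            total = 0.0
            for p in range(k):
                total += a[i][p] * b[p][j]
            out[i][j] = total
    return out


def time_both(n=120, seed=0):
    """Return (naive_seconds, blas_seconds) for one n x n product."""
    rng = np.random.default_rng(seed)
    a = rng.random((n, n))
    b = rng.random((n, n))

    t0 = time.perf_counter()
    slow = naive_matmul(a.tolist(), b.tolist())
    t1 = time.perf_counter()
    fast = a @ b  # delegated to the BLAS library behind NumPy
    t2 = time.perf_counter()

    # Both paths must agree before the timings mean anything.
    assert np.allclose(slow, fast)
    return t1 - t0, t2 - t1


if __name__ == "__main__":
    naive_s, blas_s = time_both()
    print(f"naive: {naive_s:.4f}s  BLAS-backed: {blas_s:.6f}s")
```

The exact ratio depends on the machine and the BLAS build, so treat any numbers you get as indicative only.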

John Langford

For an overview of available options for dealing with big data, John Langford’s post on parallel machine learning approaches is a good introduction. Here’s the gist:

Don’t do it for the sake of parallelization. Have some other real goal in mind that justifies the effort. Parallelization is both simple and subtle which makes it unrewarding unless you really need it. Strongly consider the programming complexity of approaches if you decide to proceed.

Benchmarks

Opinions are all well and good, but let’s see some numbers. All the benchmarks below contain results for Spark, which is currently the de facto industry standard for cluster computing, at least in terms of mind share. Spark compares itself to Hadoop. Marketing-wise, it’s very sound: compared to Hadoop, everything looks very good, as you will see from the first benchmark below. History likes to repeat itself, and it appears that Spark has become a convenient benchmark in turn.

Graphlab and GraphChi

Graphlab is another quite popular name these days. Less known is Graphlab’s little spin-off, GraphChi. It’s meant for disk-based large-scale graph computation, or out-of-core learning.

GraphChi performance benchmarks seem competitive. Basically, it is one Mac Mini against 50 or 100 machines, with similar results. Too bad they only show GraphLab numbers for one machine with eight cores; it would be interesting (and potentially awkward) to see how GraphLab does when distributed.

More graph-processing software

Frank McSherry is a Microsoft researcher dealing with distributed systems. He’s a co-author of Naiad and has a blog post, Scalability! But at what COST?:

Here is the set-up: we took several recent graph-processing publications from the systems community, and compared the measurements they report to simple single-threaded implementations running on my work laptop (RIP).

It contains some numbers, so take a look. For now, the take-aways:

It is worth understanding which of these features are boons, and which are the tail wagging the dog. We go to EC2 because it is too expensive to meet the hardware requirements of these systems locally and fault-tolerance is only important because we have involved so many machines.

Lots of people struggle with the complexities of getting big data systems up and running, when they possibly shouldn’t be using the systems in the first place. The data sets above are certainly not small (billions of edges), but still run just fine on a laptop. Much faster than the distributed systems, at least.

BidMach

BidMach is a machine learning library in Scala, optimized for GPUs. They tested it against Spark, GraphLab, Vowpal Wabbit and other software. The benchmarks speak for themselves. This far into the article, you know what to expect.

fbpca

Facebook released a fast randomized PCA/SVD implementation. It’s written in Python and can handle large matrices. The timings include one comparison with Spark: 60 seconds on a single server vs 50 seconds on a 68-machine cluster.
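For readers unfamiliar with the technique, here is a minimal NumPy sketch of a randomized SVD: project the matrix onto a small random subspace, refine that subspace with a few power iterations, then run an exact SVD on the much smaller projected matrix. This is our own illustration of the general idea, not fbpca's code or API; the function name, the oversampling amount and the iteration count are assumptions.

```python
import numpy as np


def randomized_svd(A, k, oversample=10, n_iter=2, seed=0):
    """Approximate rank-k SVD of A (illustrative sketch, not fbpca)."""
    rng = np.random.default_rng(seed)
    m, n = A.shape
    l = min(k + oversample, n)  # work with a few extra columns for accuracy

    # Random projection captures most of the range of A.
    Q, _ = np.linalg.qr(A @ rng.standard_normal((n, l)))

    # Power iterations sharpen the subspace; QR keeps it well conditioned.
    for _ in range(n_iter):
        Z, _ = np.linalg.qr(A.T @ Q)
        Q, _ = np.linalg.qr(A @ Z)

    # Exact SVD of the small l x n matrix, mapped back to the original space.
    B = Q.T @ A
    Ub, s, Vt = np.linalg.svd(B, full_matrices=False)
    U = Q @ Ub
    return U[:, :k], s[:k], Vt[:k]


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    A = rng.standard_normal((200, 5)) @ rng.standard_normal((5, 100))
    U, s, Vt = randomized_svd(A, k=5)
    print("reconstruction error:", np.linalg.norm((U * s) @ Vt - A))
```

The expensive steps are a handful of matrix products with a thin matrix, which is why this kind of method copes well with large inputs on one machine.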

Vowpal Wabbit

Finally, some benchmarks of our own. We tried running VW in AllReduce mode, in multicore (32 cores) and cluster (8 nodes, each 32 cores) settings.

Vowpal Wabbit running on c3.8xlarge EC2 instance with 32 cores and 60GB RAM

Preparing a multicore run was relatively straightforward: you just need a shell script and the data split into a number of chunks. For a cluster, however, one needs to copy the chunked data and scripts to each machine, via S3 or otherwise. It gets complicated.
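The chunking step can be sketched in a few lines of Python. This is an illustration rather than the script we actually used: the function name and the `chunk_XX.vw` file naming are hypothetical, and dealing lines out round-robin (instead of cutting the file into contiguous blocks) is a design choice made here so that each worker sees a similar mix of examples.

```python
from pathlib import Path


def split_into_chunks(src, out_dir, n_chunks):
    """Deal the lines of `src` round-robin into `n_chunks` files.

    Returns the list of chunk paths. File names are hypothetical.
    """
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    paths = [out_dir / f"chunk_{i:02d}.vw" for i in range(n_chunks)]
    handles = [p.open("w") for p in paths]
    try:
        with open(src) as f:
            for line_no, line in enumerate(f):
                # Line 0 goes to chunk 0, line 1 to chunk 1, and so on.
                handles[line_no % n_chunks].write(line)
    finally:
        for h in handles:
            h.close()
    return paths
```

For a multicore run, each chunk is then fed to its own worker process; for a cluster, the chunks still have to be shipped to the right machines, which is where the extra work comes in.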

We benchmarked two datasets, KDD 2010b and Criteo.

On KDD10, learning a linear model in one pass over the data takes a few seconds, whether on one core, multiple cores, or a cluster.

Moreover, accuracy in parallel mode seems to be inversely correlated with the number of workers. This is the explanation from John Langford:

The data is spread over multiple nodes, meaning that the learner on each node has less data to work with. The models are combined by parameter averaging, which typically improves the average performance, but not as much as actually learning would do.

In short: there’s a downside, but no upside.
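The effect John describes can be seen in a toy setting. The sketch below is not VW's algorithm: it runs plain one-pass SGD for linear regression on synthetic data, once over the whole training set and once per shard followed by a simple average of the weight vectors. All names, the learning rate and the data sizes are assumptions chosen for illustration.

```python
import random


def predict(w, x):
    return sum(wi * xi for wi, xi in zip(w, x))


def sgd_one_pass(examples, dim, lr=0.05):
    """One pass of squared-loss SGD, starting from zero weights."""
    w = [0.0] * dim
    for x, y in examples:
        err = predict(w, x) - y
        w = [wi - lr * err * xi for wi, xi in zip(w, x)]
    return w


def average_models(models):
    """Parameter averaging: element-wise mean of the weight vectors."""
    return [sum(ws) / len(models) for ws in zip(*models)]


def mse(w, examples):
    return sum((predict(w, x) - y) ** 2 for x, y in examples) / len(examples)


def make_data(n, true_w, rng, noise=0.1):
    data = []
    for _ in range(n):
        x = [rng.uniform(-1, 1) for _ in true_w]
        data.append((x, predict(true_w, x) + rng.gauss(0, noise)))
    return data


def compare(n_workers=8, n_train=400, seed=0):
    """Return (test MSE of single learner, test MSE of averaged model)."""
    rng = random.Random(seed)
    true_w = [1.0, -2.0, 0.5]
    train = make_data(n_train, true_w, rng)
    test = make_data(1000, true_w, rng)

    single = sgd_one_pass(train, len(true_w))
    # Each worker only ever sees its own shard of the training data.
    shards = [train[i::n_workers] for i in range(n_workers)]
    averaged = average_models([sgd_one_pass(s, len(true_w)) for s in shards])
    return mse(single, test), mse(averaged, test)


if __name__ == "__main__":
    single_mse, averaged_mse = compare()
    print(f"single: {single_mse:.4f}  averaged: {averaged_mse:.4f}")
```

In this setup every shard model is under-trained in the same direction, so averaging them cannot recover what a single learner gets from seeing all the data in sequence.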

The difference with Criteo is that we used nonlinear learning: quadratic features and n-grams. The timings:

single: real 7m15.542s, average loss = 0.457247

multicore: Net time taken by process = 40.644 seconds, average loss = 0.474857

cluster: Net time taken by process = 20.366 seconds, average loss = 0.492175

In this setting, using multicore looks like a good trade-off, but the log-loss score still takes a hit.
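To put the trade-off in numbers, the snippet below turns the Criteo timings into speedups, parallel efficiency (speedup divided by worker count) and relative loss increase. The worker counts come from the setup described earlier: 32 cores for multicore, 8 nodes of 32 cores for the cluster. One caveat: the single run reports `real` wall-clock time while the other two report VW's net process time, so the comparison is approximate.

```python
RUNS = {
    # name: (seconds, average loss, number of workers)
    "single": (7 * 60 + 15.542, 0.457247, 1),
    "multicore": (40.644, 0.474857, 32),
    "cluster": (20.366, 0.492175, 8 * 32),
}


def summarize(runs=RUNS, baseline="single"):
    """Speedup, efficiency and loss increase relative to the baseline run."""
    base_s, base_loss, _ = runs[baseline]
    out = {}
    for name, (secs, loss, workers) in runs.items():
        speedup = base_s / secs
        out[name] = {
            "speedup": speedup,
            "efficiency": speedup / workers,
            "loss_increase_pct": 100.0 * (loss - base_loss) / base_loss,
        }
    return out


if __name__ == "__main__":
    for name, row in summarize().items():
        print(
            f"{name:9s} speedup {row['speedup']:5.1f}x  "
            f"efficiency {row['efficiency']:6.1%}  "
            f"loss +{row['loss_increase_pct']:.1f}%"
        )
```

That works out to roughly 10.7x on 32 cores and 21.4x on 256 cores, or about 33% and 8% efficiency, while the loss goes up by about 3.9% and 7.6% respectively.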

There’s one more catch: memory usage scales linearly with the number of workers. In other words, using 32 cores takes 32 times as much memory as a single core. This forced us to reduce VW’s hash-table size a bit. With KDD10 it didn’t make a difference; 2^25 entries (25 bits) was enough. For Criteo, more would be better.
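A back-of-the-envelope estimate shows why the hash table had to shrink. The sketch assumes a b-bit table holds 2^b weights at 4 bytes each and that every worker keeps its own full copy; the real per-weight footprint in VW can be larger depending on the options used, so read these figures as a lower bound. The function names are ours.

```python
def table_bytes(bits, bytes_per_weight=4, workers=1):
    """Total memory for `workers` copies of a 2**bits weight table.

    bytes_per_weight=4 is an assumption (one 32-bit float per slot).
    """
    return (2 ** bits) * bytes_per_weight * workers


def max_bits(ram_bytes, workers, bytes_per_weight=4):
    """Largest table size (in bits) whose copies all fit in ram_bytes."""
    bits = 0
    while table_bytes(bits + 1, bytes_per_weight, workers) <= ram_bytes:
        bits += 1
    return bits


if __name__ == "__main__":
    gib = 2 ** 30
    print("25 bits, 1 worker  :", table_bytes(25) / gib, "GiB")
    print("25 bits, 32 workers:", table_bytes(25, workers=32) / gib, "GiB")
    print("max bits in 60 GiB, 1 worker  :", max_bits(60 * gib, 1))
    print("max bits in 60 GiB, 32 workers:", max_bits(60 * gib, 32))
```

Under these assumptions, a 25-bit table costs 128 MiB per worker, or 4 GiB across 32 workers, and the largest table that fits in 60 GiB drops from 33 bits with one worker to 28 bits with 32.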