Research into recommender systems took off with the Netflix challenge, which started in 2006. For three years many contenders worked hard to achieve the prescribed error threshold. Finally, in 2009 Netflix awarded the prize, one million dollars.

Kaggle is a direct descendant of that formula. Define the problem, provide some data, choose the evaluation metric, optionally arrange for a prize, go. Works like a charm. However, there is a problem with how the incentives are set up. The motivation is very narrow: maximize the score to climb the leaderboard.

On one hand, it’s good - simple and transparent. On the other, in the real world the score is just one piece of a solution. Optimizing the score at any price does not favor simple, robust, production-ready systems. We touched on this in an earlier article, Kaggle vs industry. From the horse’s mouth:

We evaluated some of the new methods offline but the additional accuracy gains that we measured did not seem to justify the engineering effort needed to bring them into a production environment.

If you’d like to learn more about the technology, here’s Edwin Chen’s summary of what went into winning the Netflix Prize. Digging into the papers may cause your head to spin, but a short version is that matrix factorization emerged as a winning technique.
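As a rough illustration of what matrix factorization means here, the sketch below learns a short vector for every user and every item so that their dot product approximates the known ratings. It is a minimal toy version trained with plain stochastic gradient descent, not the method used by the prize-winning teams; the function names, the hyperparameters and the tiny `RATINGS` data set are all made up for the example.

```python
import math
import random


def predict(P, Q, u, i):
    """Predicted rating: dot product of user u's and item i's factor vectors."""
    return sum(pf * qf for pf, qf in zip(P[u], Q[i]))


def rmse(ratings, P, Q):
    """Root mean squared error over (user, item, rating) triples."""
    total = sum((r - predict(P, Q, u, i)) ** 2 for u, i, r in ratings)
    return math.sqrt(total / len(ratings))


def factorize(ratings, n_users, n_items, n_factors=2,
              lr=0.05, reg=0.02, epochs=500, seed=0):
    """Learn user factors P and item factors Q with SGD (illustrative settings)."""
    rng = random.Random(seed)
    P = [[rng.gauss(0, 0.1) for _ in range(n_factors)] for _ in range(n_users)]
    Q = [[rng.gauss(0, 0.1) for _ in range(n_factors)] for _ in range(n_items)]
    for _ in range(epochs):
        for u, i, r in ratings:
            err = r - predict(P, Q, u, i)
            for f in range(n_factors):
                pu, qi = P[u][f], Q[i][f]
                # Gradient step on squared error with L2 regularization.
                P[u][f] += lr * (err * qi - reg * pu)
                Q[i][f] += lr * (err * pu - reg * qi)
    return P, Q


# Made-up toy data: (user, item, rating). Users 0-1 prefer items 0-1,
# users 2-3 prefer items 2-3.
RATINGS = [
    (0, 0, 5), (0, 1, 4), (0, 2, 1),
    (1, 0, 4), (1, 1, 5), (1, 3, 1),
    (2, 2, 5), (2, 3, 4), (2, 0, 1),
    (3, 2, 4), (3, 3, 5), (3, 1, 2),
]

P, Q = factorize(RATINGS, n_users=4, n_items=4)
print("training RMSE:", round(rmse(RATINGS, P, Q), 3))
print("user 0, unseen item 3:", round(predict(P, Q, 0, 3), 2))
```

The interesting part is the last line: the model produces a score for a user and item pair that never appeared in the data, which is what a recommender needs.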

Some improvements have appeared in recent years, notably incorporating side information. In the case of movies, side information would be genre, year, director, cast, and so on.

Another trend is to emphasize ranking explicitly, instead of minimizing RMSE on ratings (RMSE is a very lousy metric for recommendation). Ranking-based methods focus on finding the most worthwhile items.
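To see why RMSE and ranking quality can disagree, consider the toy comparison below. The ratings and both sets of predictions are invented for the example, and the fraction of correctly ordered item pairs stands in for a ranking metric; real ranking methods typically use other measures.

```python
import math
from itertools import combinations


def rmse(true, pred):
    """Root mean squared error between true and predicted ratings."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(true, pred)) / len(true))


def pairwise_accuracy(true, pred):
    """Fraction of item pairs that the predictions put in the correct order."""
    pairs = [(i, j) for i, j in combinations(range(len(true)), 2)
             if true[i] != true[j]]
    correct = sum((true[i] - true[j]) * (pred[i] - pred[j]) > 0
                  for i, j in pairs)
    return correct / len(pairs)


true_ratings = [5, 4, 2, 1]

# Model A is numerically close but swaps the two best items.
model_a = [4.0, 4.2, 2.5, 1.5]
# Model B underestimates every rating but keeps the order intact.
model_b = [3.0, 2.5, 1.0, 0.5]

for name, pred in (("A", model_a), ("B", model_b)):
    print(name, "RMSE:", round(rmse(true_ratings, pred), 3),
          "pairwise accuracy:", round(pairwise_accuracy(true_ratings, pred), 3))
```

Model A wins on RMSE yet recommends the wrong movie first, while model B has the larger error and the perfect ordering.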

Finally, it’s often the case that there are no explicit ratings, just some observations: a user visited these pages, watched this, listened to that. Accordingly, methods have been developed for dealing with implicit feedback like that.
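One common way to handle such data, following the formulation in Hu, Koren and Volinsky's work on implicit feedback datasets, is to turn raw observation counts into a binary preference plus a confidence weight of 1 + alpha * count. The sketch below shows only that preprocessing step; the event log, the function names and the value of `alpha` are assumptions made for the example.

```python
from collections import Counter


def to_preferences(events, alpha=40.0):
    """Map (user, item) events to {pair: (preference, confidence)}.

    Every observed pair gets preference 1 and confidence 1 + alpha * count.
    """
    counts = Counter(events)
    return {pair: (1, 1.0 + alpha * n) for pair, n in counts.items()}


def lookup(prefs, user, item):
    """Unobserved pairs default to preference 0 with minimal confidence 1."""
    return prefs.get((user, item), (0, 1.0))


# Made-up event log: each tuple is one observation (a view, a play, a visit).
events = [("ann", "alien"), ("ann", "alien"), ("bob", "amelie")]
prefs = to_preferences(events, alpha=2.0)

print(lookup(prefs, "ann", "alien"))
print(lookup(prefs, "bob", "alien"))
```

A factorization model can then weight each squared error by the confidence, so repeated observations count for more than a single one and missing pairs count for little.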

Recommender systems now

These days we have many sophisticated recommendation algorithms, but systems in the wild still don’t look too impressive. In particular, we haven’t seen any interactive movie recommenders. The reason is probably high computational cost, especially for sites with many users.

There is a 2013 video of Chris Bishop - yes, that one - showing a system where you drag a movie title to the left or to the right to indicate how much you like it, and the other movies on the screen rearrange to reflect the change.

Microsoft even produced a system called Matchbox capable of online training, with the intent to use it in a commercial service. Apparently it took the shape of Project Emporia, a news recommender based on Twitter data:

Project Emporia is a joint FUSE Labs/Microsoft Research project that uses Matchbox to provide a content-based recommender system for the real-time web. The key idea is to learn about the relevance of content snippets called tweets from explicit like/dislike feedback provided by users and bootstrapped from the content of Twitter lists.

Unfortunately, the project was retired. Matchbox code remains available in infer.net.

Interactivity

To understand why interactivity is important, let’s look at Chris Dixon’s idea maze for AI startups. Chris talks about the Pareto principle, or the 80/20 rule:

As a rule of thumb, you’ll spend a few months getting to 80% and something between a few years and eternity getting the last 20%. (…) At this point in the maze you have a choice. You can either 1) try to get the accuracy up to near 100%, or 2) build a product that is useful even though it is only partially accurate. You do this by building what I like to call a fault tolerant UX.

That top box represents a system which isn’t perfect, but offers instantaneous feedback to the user.

You could also argue Google search itself is a fault tolerant UX: showing 10 links instead of going straight to the top result lets the human override the machine when the machine gets the ranking wrong. Building a fault tolerant UX (…) does mean a very different set of product requirements. In particular, latency is very important when you want the human and machine to work together.

With Google search, you enter a query and sometimes the first result works, sometimes the second or third, and sometimes you re-write the query to get better results. All in a matter of seconds.

In the case of movies, it would be like: rate this, rate that, get some recommendations. Add a star here, subtract half a star there. Watch how this affects results, and explore. Where’s that interactivity, where’s an app you can tinker with in real time? Nowhere to be seen. That’s why we’ll build one.

MovieMood

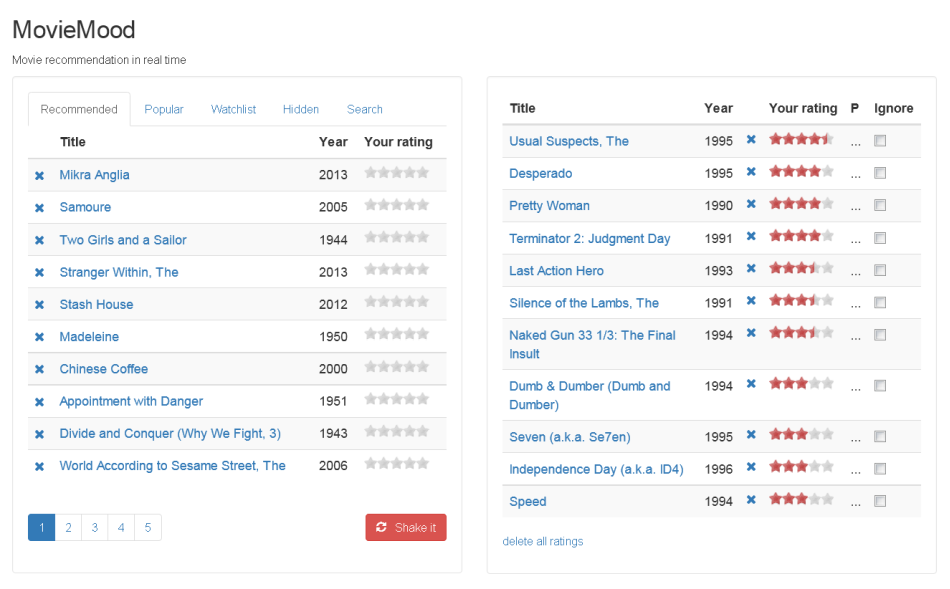

We already have the name for it: MovieMood, and a clear view of how it will work. The idea entails trading some of the technological sophistication for speed, while still being able to offer relevant recommendations. Here’s the preliminary UI design:

The panel on the left shows unrated movies, the panel on the right - your ratings. On the left there are a few tabs, the most important of which is recommendations. When you add or modify a rating, recommendations will refresh automatically.
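The refresh-on-rating behaviour could be sketched roughly as follows. This is only a guess at one cheap way to do it, not a description of how MovieMood works: the `Session` class, the precomputed `SIMILARITY` table and its values are hypothetical, and an item-to-item similarity lookup is used here simply because it is fast enough to rerun on every change.

```python
# Hypothetical precomputed item-to-item similarities (made-up values).
SIMILARITY = {
    "Alien": {"Aliens": 0.9, "Amelie": 0.1},
    "Aliens": {"Alien": 0.9, "Amelie": 0.1},
    "Amelie": {"Alien": 0.1, "Aliens": 0.1},
}


class Session:
    """Holds one user's ratings and recomputes recommendations on every change."""

    def __init__(self, similarity, neutral=3.0):
        self.similarity = similarity
        self.neutral = neutral  # ratings above this pull similar movies up
        self.ratings = {}
        self.recommendations = []

    def rate(self, movie, stars):
        """Add or modify a rating, then refresh the recommendation list."""
        self.ratings[movie] = stars
        self.recommendations = self._recommend()
        return self.recommendations

    def _recommend(self):
        """Score each unrated movie by similarity-weighted rating offsets."""
        scores = {}
        for movie, neighbours in self.similarity.items():
            if movie in self.ratings:
                continue
            scores[movie] = sum(
                sim * (self.ratings[other] - self.neutral)
                for other, sim in neighbours.items()
                if other in self.ratings
            )
        return sorted(scores, key=scores.get, reverse=True)


session = Session(SIMILARITY)
print(session.rate("Alien", 5))  # liking Alien pushes Aliens to the top
print(session.rate("Alien", 1))  # changing the rating reorders the list
```

Because each refresh only touches the current user's handful of ratings, the cost per interaction stays small, which is the kind of trade-off between sophistication and speed mentioned above.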