Good news, everyone! There’s a new contest on Kaggle - Facebook is looking for talent. They won’t pay, but just might interview.

This post is in a way a bonus for active readers because most visitors of fastml.com originally come from Kaggle forums. For this competition the forums are disabled to encourage own work. To honor this, we won’t publish any code. But own work doesn’t mean original work, and we wouldn’t want to reinvent the wheel, would we?

The contest differs substantially from a Kaggle stereotype, if there is such a thing, in three major ways:

- there’s no money prizes, as mentioned above

- it’s not a real world problem, but rather an assignment to screen job candidates (this has important consequences, described below)

- it’s not a typical machine learning project, but rather a broader AI exercise

You are given a graph of the internet, actually a snapshot of the graph for each of 15 time steps. You are also given a bunch of paths in this graph, which at one point in time were optimal, that is, cheapest. You are asked to predict whether all these paths will be optimal for the next five time steps.

After you have understood the task, it seems to boil down to a search. AI community has path-finding nailed down, so if you need to, you can easily learn about it - for example from a brilliant description of finding your way in Romania by Peter Norvig, or from the Berkeley AI course.

We will search for best alternative paths to see if a given one is optimal. Of course, first we’d better check the path - if it exists, and whether its cost is zero - when it’s zero, it’s optimal by definition. For each path we check its optimality in each time period and maybe get a vector like one of those:

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 (all ones, or all zeros)

1 1 1 1 1 1 1 1 0 0 0 0 0 0 0 (ones, then zeros, or vice versa)

0 0 0 0 0 1 0 0 0 0 0 1 0 0 0 (mostly zeros, or mostly ones)

0 1 1 0 0 1 1 0 0 0 1 0 1 0 0 (a mix)

What we do basically depends on how these vectors look in practice. If a significant part looks like the first two cases, at this point we could get sensible predictions for them, predict a mean for the rest and probably do OK, that is, beat the benchmark.

The latter cases, with intermingled zeros and ones, are tougher. Here’s when we might get into some machine learning. For example, a Markov chain seems a good fit. Markov chains are about state transitions in discrete time steps - exactly what we have here. The model is rather simple once you learn it.

In short, what we should get is a probability of going from not-optimal to optimal and a probability for going the other way (they needn’t sum to one). We can then put the right probability as a prediction for the first test period. Computing probabilities for the next periods is only slightly more involved.

Finally, it’s worth noting that the organizers are playing tricks with us to make things harder, while for a real problem they generally would try to make things as easy as possible. For example, look at these names - they all refer to the same entity:

CHTTL-TW ChungHwa Telecom. Co. Telecommunication .Lab

CHTTL-TW ChungHwa Telecom. Co. Telecommunication .Lba

CHTTL-TW ChungHwa Telecom. Co. Telecommunication .abL

CHTTL-TW ChungHwa Telecom. Co. Telecommunication CHTTL-TW Lab.

CHTTL-TW ChungHwa Telecom. Co. Telecommunication ChungHwa Lab.

CHTTL-TW ChungHwa Telecom. Co. Telecommunication Co. Lab.

CHTTL-TW ChungHwa Telecom. Co. Telecommunication L.ba

CHTTL-TW ChungHwa Telecom. Co. Telecommunication La.b

CHTTL-TW ChungHwa Telecom. Co. Telecommunication Lab.

CHTTL-TW ChungHwa Telecom. Co. Telecommunication Lab. Lab.

CHTTL-TW ChungHwa Telecom. Co. Telecommunication Lb.a

CHTTL-TW ChungHwa Telecom. Co. Telecommunication Telecom. Lab.

CHTTL-TW ChungHwa Telecom. Co. Telecommunication Telecommunication Lab.

That’s only an excerpt - for this node there is at least 78 names in the first train file alone and at least 711 total. This means we better do some pre-processing to identify redundant names. Here’s a tip to get you started: consider clustering in Google Refine.

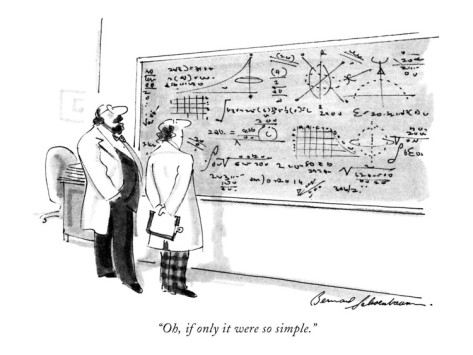

The cartoon comes from The New Yorker.