Recently at least two research teams made their pre-trained deep convolutional networks available, so you can classify your images right away. We’ll see how to go about it, with data from the Cats & Dogs competition at Kaggle as an example.

We’ll be using OverFeat, a classifier and feature extractor from the New York guys lead by Yann LeCun and Rob Fergus. The principal author, Pierre Sermanet, is currently first on the Dogs vs. Cats leaderboard.

The other available implementation we know of comes from Berkeley. It’s called Caffe and is a successor to decaf. Yangqing Jia, the main author of these, is also near the top of the leaderboard.

Both networks were trained on ImageNet, which is an image database organized according to the WordNet hierarchy. It was the ImageNet Large Scale Visual Recognition Challenge 2012 in which Alex Krizhevsky crushed the competition with his network. His error was 16%, the second best - 26%.

Data

The Kaggle competition features 25000 training images and 12500 testing images of dogs and cats. The images have different shapes and sizes and are quite large, as opposed to CIFAR-10, for example. The metric is accuracy.

A kitty from the training set

Since we have a pre-trained network, we can use the training set for validation, that is to see how well we are doing with classification.

OverFeat

When classifying, you give OverFeat an image and it outputs five (by default) most probable classes and a probability for each.

cd overfeat

./bin/linux/overfeat samples/bee.jpg

See the README for more details. Here’s what it thinks of the kitty above:

Egyptian cat 0.768662

tabby, tabby cat 0.152869

tiger cat 0.0663628

Siamese cat, Siamese 0.0011604

lynx, catamount 0.00105476

One small hurdle we need to clear is that these are ImageNet labels,way more detailed than simply “cat” and “dog”. There are at least 60 classes of cats and around 200 classes of dogs. It seems that you can get the labels from the WordNet. We just copy-and-pasted some from the ImageNet browser. The lists are on Github.

OverFeat has a script for batch classification, but it stops working when the number of images is large. Therefore we have written some simple Python code to classify images in a given directory with OverFeat.

There are two pre-trained networks to choose from, one faster and one more accurate, and we use the latter (-l switch, for “large”). Even though OverFeat employs multiple CPU cores, the process takes a while: on our reference laptop, it’s almost a second per image. By the way, that’s the version we compiled ourselves - it seems to use multicore to much fuller extent and run faster than pre-built binaries.

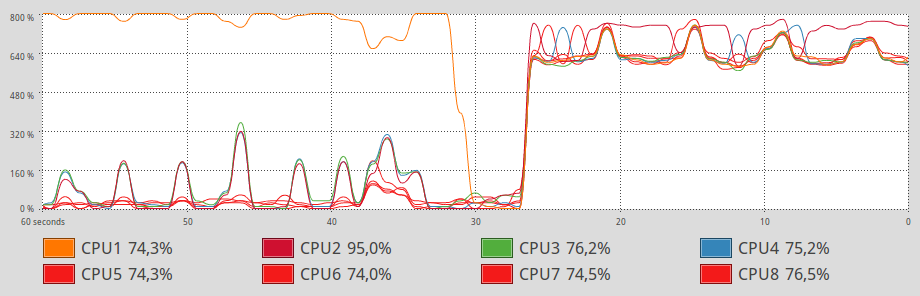

CPU usage - on the left pre-built, on the right custom

Now that we have predictions and lists of classes corresponding to cats and dogs, we can compute the accuracy using the compute_train_acc.py script. It turns out that OverFeat classifies 86.5% images correctly. We consider this a fair score.

Spot a cat in this image

For about 700 images it outputs predictions that are neither dog nor cat, but things like:

'black-footed ferret, ferret, Mustela nigripes', 'polecat, fitch, foulmart, foumart, Mustela putorius', 'skunk, polecat, wood pussy', 'weasel', 'hamster'

'weasel', 'polecat, fitch, foulmart, foumart, Mustela putorius', 'black-footed ferret, ferret, Mustela nigripes', 'mink', 'indri, indris, Indri indri, Indri brevicaudatus'

'wallet, billfold, notecase, pocketbook', 'cassette', 'purse', 'rock beauty, Holocanthus tricolor', 'ringlet, ringlet butterfly'

'lens cap, lens cover', 'carton', 'dumbbell', 'mouse, computer mouse', 'ping-pong ball'

Some misclassifications are dog-like and cat-like animals, some refer to things present in an image besides the animal. When we add wild canine (wolves, foxes, etc.) and musteline to the labels, we get 87.5%.

Doge, so obvious, very certain, much confidence 1.0

The code is available at GitHub.

See the next post for info on how to get even better results.