The most common form of cheating in first-person shooter games is wallhacking: seeing enemy players through obstacles. We propose a solution to this problem building on a mechanism already used in some professional esports matches: taking random screenshots during gameplay.

If a game takes screenshots and uploads them to “the cloud”, either interested players or neural networks can look at them and detect cheating, so that people who deal with banning cheaters only have to handle a relatively small number of high-probability cases. We explain the details and address the objections and problems that might come up.

As far as we understand, most current anti-cheat solutions work much like an antivirus program: they look for suspicious software on a player’s computer, for example software that interferes with the screen or tries to access the GPU directly. There are multiple problems with this approach.

One is that there are legitimate programs that do the same thing. For example, there are programs to display frames per second on the screen, and overclocking and temperature/fan control applications access the GPU directly. If an anti-cheat denies access, it will break functionality of legitimate applications. If it allows access, the cheats will sneak in.

Another, perhaps more serious, problem is that ultimately it is the player who controls the computer, not the anti-cheat. Cheat makers will leverage this to circumvent anti-cheats. Antivirus programs work because they are aligned with the interests of computer owners. When the owner wants to cheat, there’s no such alignment for an anti-cheat.

Therefore, this antivirus-like approach is inherently not viable as a sole solution. We will now investigate another angle.

Just take screenshots

The most popular form of cheating, the wallhack, involves seeing enemies through walls. By definition, it alters the screen visually. Because of this, we could take screenshots and check them for cheating. To give you an idea of how it works, let’s inspect what cheats may look like for a cheater. Here’s a screenshot from Counter-Strike: Global Offensive.

A teammate on the right is marked with a triangle above his head. That’s what the game itself does. A cheat shows an enemy player as a blue silhouette in a box. Also, it highlights in white an object of interest - a smoke grenade.

Same match, same cheat. Three enemies visible, but for whatever reason only one has a silhouette. All three have boxes around them, though.

By the way, did you not notice the two boxes immediately? That’s because of the low image resolution and the lack of movement, but it also illustrates the fact that for the cheats to be useful, they have to be plainly visible to a cheater. If a cheat maker attempts to disguise them to evade detection, that lowers their usability. A wallhack can’t just be a single dot. It has to be clearly visible. And if it’s visible, it will be visible on a screenshot.

Can it work?

The success of the screenshot approach hinges on the game being able to take these screenshots reliably. Can a malicious program display something on the screen but block it from appearing in a screenshot? We don’t know. Hopefully not.

However, even if it could, it’s not the end of the world. The question is, can you detect tampering with taking screenshots? Probably. And if you can, you can also just block the user from playing the game. Few, if any, legitimate applications would actively mess with screenshotting.

We assume that it’s possible to take screenshots at least somewhat reliably, because this approach is already used in some professional matches. It involves installing software called MOSS, which basically takes random screenshots from time to time. MOSS also does some other things, notably verifying the integrity of its files. If it’s good enough for pro esports matches, then maybe this solution is indeed viable.

The limitation of MOSS is that you have to do everything by hand. Players upload their files manually, and if you are interested in viewing the screenshots, you download a ZIP file with them.

UPDATE: December 2021

From the MOSS FAQ page it would seem that you can only take screenshots reliably on Windows 10. Well, good news, everyone: over 80% of Windows users already use Windows 10, and its share will only grow.

What about the remaining 20%? If a company decides to make an anti-cheat based on taking screenshots, it could divide the players into those who can run it on Windows 10 and the rest. That would result in two trust tiers. Players could be matched only with people from the same trust tier. If someone in the lower tier encounters cheaters regularly, upgrading to Windows 10 would be a small price for solving the problem.
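The two-tier idea can be sketched in a few lines. This is only an illustration: the `can_screenshot` flag and the tier names are our own assumptions, not part of any existing matchmaking system.

```python
from collections import defaultdict


def group_by_trust_tier(players):
    """Split players into matchmaking pools keyed by trust tier.

    `players` is a list of (name, can_screenshot) pairs. `can_screenshot`
    is a hypothetical flag meaning "this client can take screenshots
    reliably" (for example, because it runs Windows 10).
    """
    pools = defaultdict(list)
    for name, can_screenshot in players:
        tier = "high" if can_screenshot else "low"
        pools[tier].append(name)
    return dict(pools)


# Matches would then be formed only from players inside the same pool.
pools = group_by_trust_tier([("ann", True), ("bob", False), ("cid", True)])
```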

Automating the process

Imagine that a game takes screenshots and uploads them to a server automatically. It’s not rocket science; it’s very easy, as long as you make sure that nothing tampers with the upload. Sending images consumes little network bandwidth, too.

An objection that might come up here is about privacy. As we see it, a game publisher just needs to do two things. First, ensure they are covered legally, by mentioning screenshot uploads in the terms of use. Second, only take screenshots of the game itself, not the desktop or other applications. This way, there is no sensitive information in the screenshot. If a third party views them, they can see some usernames, and possibly some team chat content, and that’s it.

Now, who is to check millions of these screenshots for evidence of cheating?

Involving the community

There are many people inherently motivated to do just that: players who suspect cheating on the other team. It is possible to set up a system in which they do the work. Just enable people to take matters into their own hands.

This general idea has already been implemented by Valve in their Overwatch system for CS GO. We talked about Overwatch at length in the previous article. Overwatch has its problems: it falls short in providing motivation, and it’s relatively time-consuming and difficult to detect cheaters by watching match replays. Let’s try to design something better.

The process would be as follows. During a match, the game takes screenshots and uploads them automatically, maybe even between the rounds. After the match you log in to a website where you can see the screenshots from all the players you were in the match with. If you see any evidence of cheating, you click to report.

This way, employees of the game company responsible for reviewing stuff would only deal with these manual reports provided by the community, meaning that most of the work of detecting cheaters has already been done. It’s like Mechanical Turk, but the people doing work are motivated by their own interest. Win/win.

There will be bogus reports, of course. To cope with this, the final reviewers could label a report as either good or bad, and the reports from users with low scores would end up at the bottom of the queue. If you submit a shit report once, twice, thrice, nobody will look at the fourth one. Reviewers would first look at reports from fresh users, submitting for the first or second time, and at reports from known good users.
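A minimal sketch of such a queue, under our own assumptions: each reporter has a history of (good, bad) verdicts from reviewers, a user with fewer than two past reports counts as fresh, and an accuracy of 50% separates "known good" from "low score". All names and thresholds here are made up for illustration.

```python
def reporter_rank(good, bad):
    """Sort key for a reporter; smaller keys are reviewed earlier."""
    total = good + bad
    if total < 2:
        return (0, 0.0)  # fresh user: first or second report
    accuracy = good / total
    if accuracy >= 0.5:  # assumed cut-off for "known good"
        return (0, -accuracy)  # better track record sorts first
    return (1, -accuracy)  # low score: bottom of the queue


def order_queue(reports, history):
    """Order reports for review.

    reports: list of (report_id, reporter_name)
    history: dict reporter_name -> (good_count, bad_count)
    """
    return sorted(
        reports,
        key=lambda report: reporter_rank(*history.get(report[1], (0, 0))),
    )


history = {"alice": (5, 0), "troll": (0, 3)}
reports = [("r1", "troll"), ("r2", "newbie"), ("r3", "alice")]
queue = order_queue(reports, history)
```

With this toy data, the report from the known good user comes first, the fresh user second, and the repeat offender last.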

Building the infrastructure required for this should be rather simple. Game companies already have websites for doing similar things, such as logging in and reporting issues. The only new element is to upload the screenshots and show them to people.

We think this system is good because it’s simple, relatively low-tech, and should be reliable. It leaves the hard work to people who will gladly do it.

Neural networks for looking at images

How about using machine learning to automate things further? The obvious move would be to employ convolutional neural networks. We would like to have a network which could classify a screenshot from the game and tell us the probability that there are wallhacks visible in it.

The source of negative examples is unlimited: just take screenshots from normal matches. To get positive examples, one would obtain and install cheats, then activate them and play. We are sure that people working on anti-cheats have plenty of different cheats available.

What about new, yet unknown cheats? Wallhacks all work in a similar way, but differ in details. Because of this, we restate the task as detecting foreign visual artifacts in a game. Note that there are legitimate “foreign artifacts”, for example FPS (frames per second) counters and other kinds of overlays. In Rainbow Six: Siege, for instance, overlays showing enhanced stats are popular. These things would count as negative examples in training, so that the network could learn to recognize and ignore them.

At test time, when producing predictions, the neural network could also run locally, on a gamer’s computer. It might be a simplified version of the model to pre-screen the images before sending them. A major disadvantage of this is the risk that cheat developers could get hold of the model, then use it to check how it reacts to their cheats and potentially develop ones able to evade detection. It is known that you can often trick a CNN with an adversarial example. Not having access to the model makes it harder.

It is entirely possible that some game companies already use this approach for detecting cheaters. It is very clear, however, that at least some do not.

Challenges

Some wallhacks look foreign, so they are easy to recognize automatically. However, many games highlight teammates during matches, and highlight enemies in match replays or when spectating. If a cheat uses these built-in mechanisms, it looks like part of the game, and that makes it harder for a neural network to detect.

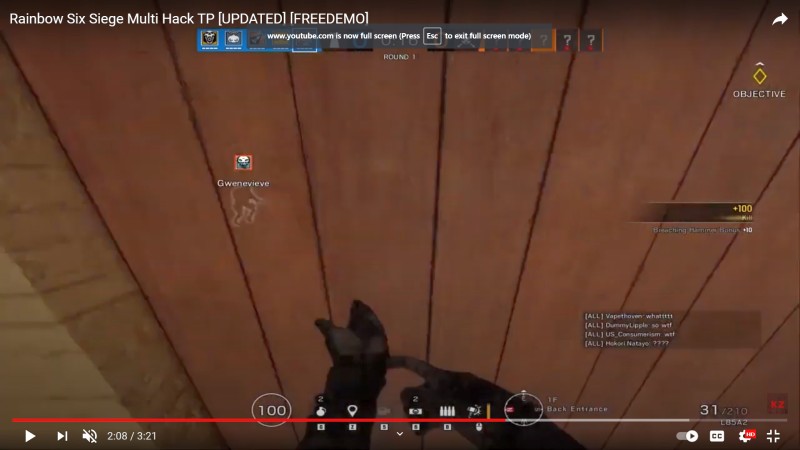

As an example, let’s look at Rainbow Six: Siege, a popular tactical team-based first person shooter. This game currently has a serious problem with cheating. If you watch streamers playing on Twitch, they deal with cheaters constantly.

The game itself shows teammates through walls - here, one on the left, one above the gun sights. There is an outline, a username and a symbol. All team symbols appear top left, so you can tell that symbols above outlines belong to teammates.

This is a cheat. A player from the enemy team is visible through a wall. His symbol has a red border. This cheat apparently abuses the built-in game mechanism to show players that shouldn’t be shown. In some circumstances the enemies become legitimately visible through walls like that. More on this below.

Here is the same cheat again. Objects of interest are highlighted, including one enemy player; this would be very easy to detect. Additionally, two enemy players are visible through a wall.

Another cheat; this time objects of interest are highlighted in turquoise. Notice how on the right side there’s no outline, but a player behind a wall is marked with a symbol and a red username.

Dealing with challenges

Due to the challenges described above, detecting cheats automatically from screenshots goes from “pretty easy” to “somewhat difficult”. It is still very easy for humans, so we would recommend building a system involving the community, as described earlier in this article.

How would we deal with this using machine learning? We envision two lines of defense. First, detect foreign artifacts, everything that does not belong, like those purple/turquoise highlights. Second, go into detail, checking for abuse of built-in game mechanisms.

Using the Siege example, check the symbols visible on screen. Are these teammates or enemies?

Teammate symbols are always visible in a row top left of the screen. You could train a network to read the symbols from there, but it’s unnecessary, since everybody (server, clients) has this information. A network could detect all operator symbols in a screenshot - that’s a rather trivial task. Then you have a list of teammate operators and a list of detected operators. Compare them. If there’s an enemy symbol, it shouldn’t be shown, unless it’s a special case.

Finally, check for those special cases. Here’s an example of all enemies being marked as a result of interrogation (you don’t want to know).

The thing is, only one operator in the game can do this, and it happens rarely. If this operator’s symbol is not in the teammate list, then we have a cheater. If more than a small share of a player’s screenshots feature this, even with the operator in play, we also have a cheater.
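The symbol comparison and the special case can be sketched as follows. We assume a detector has already turned a screenshot into a list of operator symbols. The operator names, the `reveal_operator` placeholder and the 5% threshold are hypothetical values chosen for illustration.

```python
def classify_screenshot(detected, teammates, reveal_operator="interrogator"):
    """Label one screenshot from the operator symbols detected in it.

    detected:        symbols a detector found in the screenshot
    teammates:       symbols of the player's own team (known to the server)
    reveal_operator: placeholder name for the one operator whose ability
                     can legitimately reveal enemies
    """
    enemies = set(detected) - set(teammates)
    if not enemies:
        return "clean"
    if reveal_operator not in teammates:
        return "cheat"  # enemy symbols shown with no legitimate explanation
    return "maybe_legit"  # could be the rare special case


def player_is_suspicious(labels, max_legit_share=0.05):
    """Flag a player from the labels of all their screenshots."""
    if not labels:
        return False
    if "cheat" in labels:
        return True
    # The special case is rare, so a high share of it is suspicious too.
    share = labels.count("maybe_legit") / len(labels)
    return share > max_legit_share


label = classify_screenshot(["op_a", "op_x"], ["op_a", "op_b"])
```

Here `op_x` is not a teammate and the revealing operator is not on the team, so the screenshot is labelled as a cheat.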

It gets a bit complicated like this, but with each step you get closer to the goal. If you eliminate 80% of cheats, that’s a big success. If it’s 90% or 95%, that’s the problem solved.

Mini-map cheats

Many games, particularly those without much vertical complexity, like Counter-Strike and Valorant, have mini maps. These maps show the positions of the teammates. If any of your teammates sees an enemy in game, the enemy will also show up on the map.

Showing all enemies all the time on a mini map makes for a very good cheat. It still provides all the information a cheater needs, and at the same time is much more difficult to detect. When using wallhacks, cheaters look at enemies through walls, and it is often very obvious when watching match replays. There’s no such behaviour with mini map hacks.

Fortunately, a solution for this is simple, at least in concept. Let a neural network, or some other kind of program, count the enemies visible on the mini map. If they are all consistently visible, you have a cheater.
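Once the counting is done, the decision itself is simple. The sketch below assumes that each screenshot yields a pair: the number of enemy markers counted on the mini map and the number of enemies alive at that moment (which the server knows). The thresholds are arbitrary illustrative values.

```python
def minimap_suspicious(samples, min_samples=20, threshold=0.9):
    """Flag a player whose mini map shows all living enemies almost always.

    samples: list of (enemies_on_minimap, enemies_alive) pairs,
             one pair per screenshot.
    """
    # Screenshots taken when no enemy is alive carry no information.
    usable = [(seen, alive) for seen, alive in samples if alive > 0]
    if len(usable) < min_samples:
        return False  # not enough evidence either way
    all_visible = sum(1 for seen, alive in usable if seen >= alive)
    return all_visible / len(usable) >= threshold


cheater = minimap_suspicious([(5, 5)] * 30)
honest = minimap_suspicious([(1, 5)] * 30)
```

Requiring a minimum number of samples avoids flagging someone whose teammates happened to spot the whole enemy team in a couple of screenshots.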

Since mini maps are designed to be legible, counting objects in them should be very easy for a neural network. If a CNN can count cars in this image, including occluded ones, it can count a few non-overlapping red dots on a mini map.

These two examples come from the tensorflow_object_counting_api GitHub page.

Game developers working hard and Zygmunt telling them how to make things easier

In fact, there’s a mobile application for counting things, called CountThings, if you’d like to have things counted. It shows that machine learning can be used for that purpose.

Aimbots

There are other forms of cheating, notably aimbots. In shooter games, the basic skill is to click things on screen quickly and with great precision. Aimbots provide assistance with this. In contrast to wallhacks, they can’t be seen on screen: there’s nothing to see. Also, advanced aimbots are programmed so that the movement of the crosshair looks somewhat natural. It’s not a jerky jump, it’s as if a skilled player saw an enemy and reacted.

In our opinion, this makes aimbots difficult to detect by methods other than the traditional antivirus-like way. One angle of attack would be to observe reaction times. Humans have some inherent delay in responding to stimuli. Gifted or trained gamers can react by clicking in about 150-200 ms. You can test yourself with an online reaction-time test. Apparently almost nobody can click in less than 125 ms. Therefore, if a game observed too-quick reactions to sudden events consistently over and over again, it would be very suspicious.
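A minimal sketch of such a check, assuming the game can log the delay between an enemy appearing and the player’s shot. The 125 ms floor comes from the figure above. The minimum number of events and the use of the median are our own choices.

```python
from statistics import median


def reactions_suspicious(reaction_ms, floor_ms=125.0, min_events=30):
    """Flag a player whose typical reaction time is below the human floor.

    reaction_ms: delays in milliseconds between a sudden event
                 (an enemy appearing) and the player's response.
    """
    if len(reaction_ms) < min_events:
        return False  # a few lucky pre-fired shots prove nothing
    # The median ignores occasional outliers in either direction.
    return median(reaction_ms) < floor_ms


bot_like = reactions_suspicious([90.0] * 40)
human_like = reactions_suspicious([180.0] * 40)
```

Using the median rather than the fastest reaction means a single lucky shot does not get anyone flagged; only consistent too-quick reactions do.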

Fortunately, aimbots are a small problem compared to wallhacks. Deal with wallhacks and you’re 80% there. That is, if you’ve dealt with…

Spinbots

Sometimes, the bar is lower. In CS GO, there are so-called spinbots. A player using a spinbot is usually looking straight down all the time, only to flick and shoot an enemy with inhuman speed and precision.

Tweet by ohnePixel (@ohnePixel), April 29, 2021: “i enjoyed my stay in low trust” pic.twitter.com/XwIALWVLpZ

These should be trivial to detect due to unusual and very alien movement patterns (see below for discussion on mouse/controller movements). Yet people meet spinbotters all the time in matches. It shows how bad the cheating situation still is in CS GO.
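As a rough sketch of how simple such a check could be, the function below flags a player who looks almost straight down for most of a match. It assumes the server samples the view pitch in degrees, with positive values meaning "down". Both the sign convention and the thresholds are assumptions made for illustration.

```python
def looks_like_spinbot(pitch_samples_deg, down_threshold_deg=80.0,
                       min_down_share=0.8):
    """Flag a player who stares at the floor for most of the match.

    pitch_samples_deg: view pitch sampled at regular intervals,
                       assuming positive values mean looking down.
    """
    if not pitch_samples_deg:
        return False
    looking_down = sum(1 for p in pitch_samples_deg if p >= down_threshold_deg)
    return looking_down / len(pitch_samples_deg) >= min_down_share


spinbot = looks_like_spinbot([89.0] * 95 + [0.0] * 5)
normal = looks_like_spinbot([5.0, -10.0, 20.0] * 30)
```

A real check would also look at the flicks themselves, but even this crude rule separates the two toy traces.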

Mouse and keyboard on console

Another form of cheating is to use a mouse and keyboard (MnK) on a console. Console players normally use a controller to play, but there are adapters which allow connecting MnK. This provides a big competitive advantage, because it is way easier to aim with a mouse than with a controller.

In our opinion, console makers should just accept the reality and allow using MnK. To keep the playing field level, this would require the games to have one division for controller players and another for MnK players.

Yet people would probably still cheat by using MnK in a controller division. Which brings us to the discussion on how to tell MnK from a controller automatically. Good news, everyone! Controller movements are very different from mouse movements. For example, you can easily draw a neat circle or an ellipse with a mouse, but you can’t with a controller. Go watch some controller gameplay and mouse gameplay on YouTube to get an idea of how they differ.

Since the movements are distinctly different, it should be easy to classify them correctly with machine learning, specifically some kind of a model for time series. Again, the training examples are trivial to get. The model could be built into the game itself (with reservations mentioned above), or the game could send samples to the server for classification.
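To make this concrete, here is a deliberately tiny stand-in for such a model: a nearest-centroid classifier over two hand-made features of the aim movement (mean speed and speed variability). A real system would more likely use a proper time-series model. The features, the toy training data and the assumption that controller aim is steadier than mouse aim are ours, purely for illustration.

```python
import math
from statistics import mean, pstdev


def features(deltas):
    """Summarize a series of per-frame (dx, dy) aim movements."""
    speeds = [math.hypot(dx, dy) for dx, dy in deltas]
    return (mean(speeds), pstdev(speeds))


def train_centroids(labeled):
    """labeled: list of (deltas, label). Returns label -> mean feature vector."""
    by_label = {}
    for deltas, label in labeled:
        by_label.setdefault(label, []).append(features(deltas))
    return {
        label: tuple(mean(column) for column in zip(*feats))
        for label, feats in by_label.items()
    }


def classify(deltas, centroids):
    """Return the label whose centroid is closest to the sample's features."""
    f = features(deltas)
    return min(centroids, key=lambda label: math.dist(f, centroids[label]))


# Made-up toy data: steady movement for a stick, bursty flicks for a mouse.
training = [
    ([(3, 0)] * 20, "controller"),
    ([(0, 4)] * 20, "controller"),
    ([(0, 0), (20, 5)] * 10, "mouse"),
    ([(1, 0), (15, 15)] * 10, "mouse"),
]
centroids = train_centroids(training)
steady = classify([(3, 1)] * 20, centroids)
bursty = classify([(0, 0), (18, 4)] * 10, centroids)
```

With real recordings, the same structure would apply: extract features or raw sequences from labelled sessions, fit a model, and classify new sessions on the server.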

Once a game can recognize MnK players, we would recommend matching them with other MnK players, so that everybody has a fair chance. Since MnK players are presumably a minority, this would result in longer waiting times for them. Want matches quicker? Don’t cheat.

Game company perspective

In the words of Microsoft’s CEO, Satya Nadella, gaming is the most expansive category in the entertainment industry. The market is massive already and growing steadily. We believe that from a game company perspective, if you have a competitive online game that people want to play, cheaters are always the #1 problem. Cheating is like cancer. When there’s rampant cheating, nothing else matters.

To illustrate this, let’s study the case of Counter-Strike and Valorant. CS GO is still the most popular online competitive shooter, but it has a big problem with cheating. Riot Games leveraged this problem, and a few others, to make a competing game, Valorant.

Valorant was explicitly marketed to CS GO players. Riot promised to make a game similar to CS GO, but without CS GO problems. They mostly delivered, and a year after release Valorant is a wild success. On Twitch, now it’s only people heavily invested in Counter Strike who stream it. All the casual streamers and much of the old guard play Valorant. CS GO remains popular, but the tide is shifting.

This can be attributed in large part to Riot’s success in curbing cheaters. The anti-cheat in Valorant is perceived as intrusive, but also effective. Whether it really is effective is hard to tell, because at the moment there’s no way to check (in particular, there’s no match replay), so it’s all based on gut feeling. Valorant wallhacks appeared on YouTube about a week after the beta release, so the situation is probably not as rosy as it might seem.

However, it’s way better than in Counter-Strike, and that’s what matters. Valorant passes the cheating test; Counter-Strike continues to fail miserably. In effect, gamers’ money which used to flow to Valve now flows to Riot Games, too.

Conclusion

If you have friends working in the gaming industry, please point them to this article. We would be happy to assist in making the world a better place, and getting rid of cheaters certainly qualifies as such.