Counter Strike is the most popular and long-lived first-person shooter game ever. As great as it can be, it has its problems. The most important one is cheating, and it has become a lot worse lately. In this article, we offer a few relatively simple solutions.

Revised and updated on 2020-01-03. MERRY NEW YEAR!

The game is a tactical team-based shooter, meaning that two teams compete on a map to achieve an objective. Usually there are five people in a team. The terrorists want to plant the bomb in one of the two designated places. The counterterrorists want to prevent this, or if the bomb is planted, to defuse it. Of course, either team can achieve a round victory by just killing everybody from the other team.

Cheating, or hacking, as it is often called in this game, takes two basic forms. One of them is knowing where the opponents are, most often by seeing them through the walls (hence wallhacking). The other one is aim assistance. If you’ve played any single-player shooting games, you know that sometimes, on lower difficulty settings, the game helps you with aiming. You don’t need to be precise, just aim in the general direction of the enemy. In multiplayer games, with humans on both sides, this wouldn’t work. But cheaters use software to achieve the same effect. Both forms give a profoundly unfair advantage and ruin the experience for other players.

Countermeasures

How does Valve detect cheaters? There are two ways. Unfortunately, in practice both of them mostly don’t work at all.

The first line of defense is VAC, which stands for Valve Anti-Cheat. It’s software that runs on a player’s computer and detects some cheats, much as an antivirus detects viruses. More on this below.

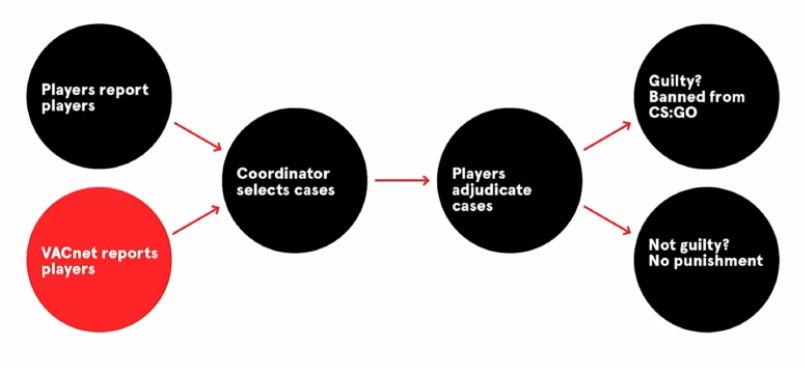

The second way uses the community’s help. This system goes by the name Overwatch (there is an unrelated game of the same title). People report suspected cheaters, and then good players review recorded gameplay demos of the suspects and issue a verdict. Then it’s back to Valve’s black box, and some people get banned in the end. You can see how it works from the judging player’s perspective in this video by WarOwl.

Valve uses Overwatch to get labelled data for their machine learning system, called VACnet. The idea is to learn to detect cheaters, and based on that, automatically select players for review in Overwatch. The diagrams come from a presentation on Using Deep Learning to Combat Cheating in CSGO, in which John McDonald talks at length about the topics that we skim here.

The presenter would have you believe that VACnet has been a great success. In a sense, it has. If you watch the video, you can see that the conviction rate in Overwatch went waaaay up after the introduction of VACnet in early 2017. This probably nudged Valve to make the game free-to-play in late 2018. They must have known that it would encourage cheating, but wanted to believe that VACnet would cope.

It wouldn’t. The cheaters are still there. A LOT OF THEM.

Better labels

In Overwatch, the verdicts are formulated on a “beyond reasonable doubt” basis. This means that there should be few false positives, but unfortunately many false negatives. In other words, the precision is high, but the recall is low.

This is a problem, because a system constructed like that can only detect blatant cheaters. It is certainly good to ban them, but it doesn’t address the real issue.
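To make the precision and recall trade-off concrete, here is a minimal sketch. The counts are entirely hypothetical, chosen only to illustrate a "beyond reasonable doubt" regime; they are not Valve's numbers.

```python
def precision_recall(true_positives, false_positives, false_negatives):
    """Return (precision, recall) for a set of verdicts."""
    precision = true_positives / (true_positives + false_positives)
    recall = true_positives / (true_positives + false_negatives)
    return precision, recall


# Hypothetical example: 100 actual cheaters get reviewed.
# Reviewers convict only the 20 blatant ones (80 slip through),
# and wrongly convict 1 innocent player.
precision, recall = precision_recall(
    true_positives=20, false_positives=1, false_negatives=80
)
print(f"precision={precision:.2f}, recall={recall:.2f}")
```

Under these made-up numbers almost every conviction is correct, yet four out of five cheaters walk free.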

Moreover, the underlying reality is that more often than not, it’s difficult to be 100% sure that someone is cheating just by viewing his gameplay. For a smart cheater (an oxymoron, we know) it is actually quite easy to stay unbanned.

For example, cheaters can toggle their hacks on and off. When someone reviews the demo, one shot may look suspicious. Then another one. Then the cheater toggles off, and is back to his mediocre self. A couple of poor plays and the reviewer comes to a conclusion that the previous good shots were just lucky. Everybody gets lucky from time to time, you know.

Still, a person with some experience develops a nose for cheaters. While you cannot be 100% sure in some cases, you can give an estimate, for example “probably not cheating”, “might be cheating”, or “pretty sure he’s cheating”. These words express probability, and in our opinion it would be very beneficial if Valve introduced probabilistic, percentage-based verdicts into Overwatch. Just add a slider, or a list with 0%, 10%, …, 90%, 100% to select from.

Note that these will work just fine for machine learning models. Log loss, or cross-entropy, the basic loss function (and evaluation metric) used in classification, should work with probabilistic labels. As far as the math goes, percentages are as good as zeros and ones. One could also try training a regression model.
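As a rough illustration of why percentages are as good as zeros and ones, here is a minimal, plain-Python sketch of binary log loss that accepts any label between 0 and 1. The function name and the sample numbers are ours, made up for the example; this is not Valve's code.

```python
import math


def log_loss(labels, predictions, eps=1e-15):
    """Mean binary cross-entropy. Labels may be hard (0 or 1) or soft (anything in [0, 1])."""
    total = 0.0
    for y, p in zip(labels, predictions):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(labels)


# Hypothetical reviewer verdicts: 90% sure, 30% sure, 0% sure.
soft_labels = [0.9, 0.3, 0.0]
model_predictions = [0.8, 0.3, 0.1]
soft_loss = log_loss(soft_labels, model_predictions)
print(soft_loss)
```

For a soft label, the loss is smallest when the model predicts exactly that probability, so a model trained this way is pushed towards reproducing the reviewers' degree of certainty rather than a yes/no answer.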

The reason for the current all-or-nothing setup is pretty obvious: they don’t want to ban innocent people. It’s similar to the situation with spam filtering - better to let a few spam messages slip into the inbox than to put a legitimate email into the spam folder.

The Trust Factor

What use are probabilistic estimates then? Well, there is another system besides the banning one. It is called the Trust Factor. Valve won’t disclose anything about it except the basics. Each player is assigned a trust score, and players with similar scores get matched together. The idea behind it is that cheaters play with other cheaters in cheaters’ hell, while the upstanding citizens enjoy each other’s company in normal games.

It’s a good idea, but in reality this system just doesn’t seem to work. It kind of worked throughout this year, for some people better, for some worse, but recently the situation has gotten really bad, and a normal person gets matched with cheaters all the time.

An easy possible solution is to introduce and use probabilistic estimates. Whatever features and algorithms Valve has in their system, the “percent sure” from Overwatch would add a strong signal.
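How such a signal might be folded in can be sketched as follows. Everything here is an assumption made for illustration: Valve does not disclose how the Trust Factor is computed, so the 0 to 1 score range, the `weight` parameter and both function names are hypothetical.

```python
from statistics import mean


def suspicion(verdicts_percent):
    """Average several reviewers' 'percent sure' verdicts into a value in [0, 1]."""
    return mean(verdicts_percent) / 100.0


def adjusted_trust(base_trust, verdicts_percent, weight=0.5):
    """Lower a hypothetical trust score (0..1) in proportion to reviewers' suspicion."""
    if not verdicts_percent:
        return base_trust  # no reviews, nothing to adjust
    return base_trust * (1.0 - weight * suspicion(verdicts_percent))


# Three reviewers were 70%, 80% and 60% sure the player cheats.
new_trust = adjusted_trust(0.8, [70, 80, 60])
print(new_trust)
```

The point is only that a player who is never convicted "beyond reasonable doubt" can still drift towards lower-trust matches when reviewers keep finding him suspicious.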

Anticheat software

It is worth noting again that in general, it is difficult to recognize a cheater just from gameplay, whether a human is watching, or a machine. For some kinds of cheats, it borders on impossible.

There is a more reliable solution. It is called anticheat, and it’s similar to an antivirus. The idea is that a program is running on a player’s computer and it watches for suspicious software interfacing with the game.

Valve has an anti-cheat. The problem is, it doesn’t work. A few times a year there are so-called VAC waves, when Valve updates the software to recognize new cheats. Some cheaters get banned (but not very many), some get scared to cheat for a few days. Then it’s all back to the old ways.

There are third-party servers you can play on instead of Valve’s servers, notably those run by Faceit. Faceit uses its own anticheat, and in practice it works pretty well and is accepted in the community.

So why does Valve have such a bad anticheat? This is one of those great questions that remain unanswered for now. Some people speculate that Valve doesn’t want to introduce an intrusive program which could potentially spy on its user. We don’t know what it means. The software is already there running 24/7, basically. Talking about “more intrusive” sounds like splitting hairs.

Even simpler measures

Let us forget the rocket science for a minute. No machine learning, no anticheats. Just simple economics. Currently, if a cheater gets banned, it costs him $0 to start again. The game is free to play, just a new Steam account and he’s good to go.

The game comes in two flavours: normal and Prime. To get Prime, you either have to play a lot (like for two months), or pay $15. Earlier, it was enough to provide a phone number. A mobile starter kit can be had for less than $5. Not much of a deterrent. $15 is better, but one can buy a Prime Steam account for about $3, maybe even cheaper if one knows where to look.

UPDATE 2021-06-04

Two and a half years after going free-to-play, and one and a half years after publication of this article, Valve has removed the free path to Prime. This means that people won’t be able to farm Prime status using bots, so these $3 accounts will no longer be available. It is a step in the right direction, although the phrase “too little, too late” comes to mind.

this doesn't fix all the problems with MM, but it's a great step in the right direction. I hope it isn't too little too late to save CS:GO, nobody really plays MM anymore in my region, good players play 3rd party, and everyone else went to Valorant

— WarOwl (@TheWarOwl) June 4, 2021

/UPDATE

In some other games, there are hardware bans. Each computer has a fingerprint. After its user is found guilty of cheating, the computer gets banned permanently. Want to cheat again? Buy a new one.

If you don’t want such drastic solutions, there’s another, very simple one: make the game paid again. The cost of $20-30 would probably work a little better than $3. But fewer people will play then, won’t they?

Let’s look at the chart of the number of players. In the bottom, long-term plot, one can notice that in summer 2018 there was a dip, which probably influenced the decision to make the game free in December 2018.

Did it help? It did, a bit, but there’s not that much difference in the big picture, and the price has been hefty. The game experience went downhill, especially for new players, who get to meet all the cheaters right away, and they don’t even know it. That certainly isn’t conducive to building a player base.

Interestingly, on the one-year chart the number peaked on October 14th, reaching an all-time high since the game went free to play. Since then, it has dropped sharply and is currently at the low summer level from earlier this year. It has been a particularly bad experience these past few weeks, and apparently people are dropping like flies*. Surprise, surprise. The very underwhelming celebration of 20 years of CS in October didn’t help either.

*To be fair, there may be other factors, such as the release of Call of Duty on October 25th.

And by the way, the fact that the game is free to play doesn’t mean it’s charity on Valve’s part. People pay for stuff, notably for weapon skins. These start at a few cents, but if you want, you can easily spend $1000 on a single item like a knife. For most people, the only hope of getting such an accessory is to gamble by opening a gazillion cases. Cases contain random items, most often just crap. Each opening is around $5.

The cheater’s perspective

A cheater who wants to remain unbanned has two things to worry about: VAC and Overwatch.

As regards VAC, it’s smooth sailing. Make a new account for free, use a cheat and see if you get banned. You did? Oh my! Download another cheat, make a new account, and test again. Repeat as necessary.

Now, Steam will get updated from time to time. It will tell you when it’s about to, so just make sure to re-test the cheat after the update.

This again shows how making the game free to play was a very bad move. It undermined VAC to such an extent that now it’s basically worthless.

One way to offset it would be to delay bans by anywhere from a few days to a month. This would make testing cheats more difficult and time-consuming. It is possible that Valve already does it, who knows.

Delaying would also provide additional training data to a model learning from gameplay features, hopefully generalizing well to previously unseen cheats.
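A back-of-the-envelope sketch shows how much a delay slows down the test loop described above. It assumes, for simplicity, that a cheater tests cheats one after another and must wait out the longest possible delay before trusting that a cheat is undetected; the function name and all numbers are hypothetical.

```python
def days_to_vet_cheats(num_cheats, max_ban_delay_days, play_days_per_test=1):
    """Days needed to test cheats sequentially on throwaway accounts.

    Assumption: each test means playing for play_days_per_test days,
    then waiting max_ban_delay_days to see whether a ban arrives.
    """
    return num_cheats * (play_days_per_test + max_ban_delay_days)


immediate = days_to_vet_cheats(num_cheats=5, max_ban_delay_days=0)
delayed = days_to_vet_cheats(num_cheats=5, max_ban_delay_days=30)
print(immediate, delayed)
```

A determined cheater could of course test several cheats in parallel on separate free accounts, which is one more argument for making new accounts cost something.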

Back to a cheater’s sad life. Of course people will notice and report you. Then, possibly, someone reviews your demo and will be asked if he’s 100% sure that you cheated. Solution? Just pretend to check some corners while playing with wallhacks, or toggle them on and off. This will introduce some doubt into the reviewer’s mind and you should be good to go.

To remedy this, Valve should introduce probabilistic verdicts into Overwatch, as described above.

Conclusion

Why doesn’t Valve do SOMETHING to solve the worst problem in its most popular and long-lived game? That is another of those grand unanswered questions, the daddy of them all.

The meme in the community is that Valve hates CS:GO. Another possible explanation, offering a glimmer of hope, is that they are working on a new version of Counter Strike, as the current engine is dated. This is probably just wishful thinking, though.